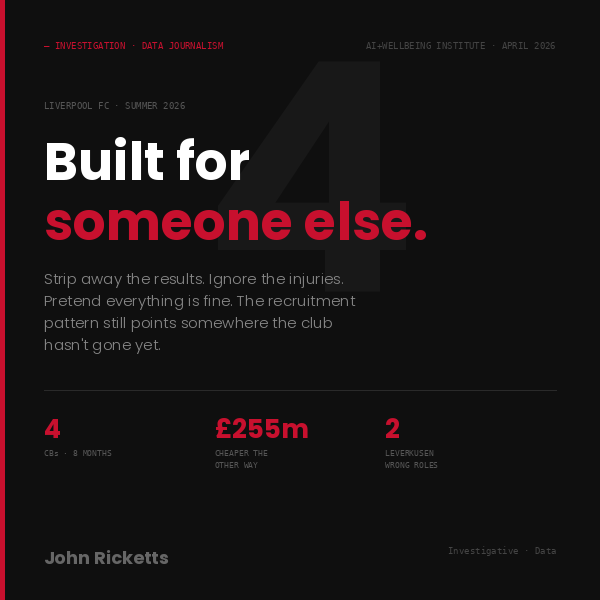

The data points somewhere Anfield hasn't gone. And getting there costs £255m less than staying where they are.

Strip away the bad season. Ignore the injuries. The data shows Liverpool have quietly assembled a squad that doesn't fit the manager in the dugout — and fits perfectly the one who isn't. Four centre-backs in eight months. Two Leverkusen players in the wrong roles. A star player released a year early from a contract just signed. The pattern is unmistakable. And fixing it the hard way costs £255m more.

The Hidden Logic of Polycrisis

Human systems need rich signals to stay healthy. Late modernity is narrowing those signals across mind, language, economy, and governance, producing brittleness where resilience should be.

This paper calls that pattern the monoculture problem: optimisation that removes the diversity complex systems depend on. Its claim is simple: these crises are connected, and the remedy is to restore the range human systems need to adapt and flourish.

Late modernity both overstimulates & starves. So what can we do about it?

You are running on 3 chemicals. The full system has over 100. This deck maps what modernity overstimulates — and what it starves.

Without New Data, the Search for Life May Never Find an Answer …

This paper argues that astrobiology needs a new data strategy. When orthodox channels are high-cost, high-latency, and weakly updating, alternative evidence becomes a rational priority.

In most writing, meaning narrows. In Finnegans Wake, meaning multiplies.

Most books try to communicate clearly. Finnegans Wake does something stranger: it keeps generating meaning faster than we can close it down.

Our research uncovers its structural singularity and asks whether that structure comes from an extraordinary density of meaning.

Designing Self-Playing Systems

Cybernetics matters because it describes how the world actually works: through feedback, adaptation, memory, and self-organisation. These dynamics shape living systems, machines, ecologies, economies, and AI. In this work, cybernetics becomes audible. Rather than composing notes or performing gestures, we design circuits that listen, respond, and play themselves.

More human than human?

Why do language models feel creative at all? This paper argues that the answer may lie not in seamless global understanding, but in the local patterns of human language.

Language may be less about saying more, than about saying enough.

Why do very different languages seem to converge on a similar rate of communication? A common answer is that humans are hitting a hard cognitive ceiling. Our new paper argues something subtler: language is not optimized for maximum throughput, but for shared understanding under real-world conditions of noise, ambiguity, memory, and repair.

We Speak Through Shared Worlds: A Rate–Distortion View of Human Language proposes that communication works inside a context-adaptive regime of collaborative compression. Shared history, expertise, culture, and common ground are not just background to communication; they are part of its compression machinery.

Sleep as a Specific Computational Process

We spend a third of our lives asleep, and science has long known sleep is essential for memory. But a new theory goes further: sleep doesn't just consolidate what you learned — it actively edits it, pruning away the noise and preserving what's most connected and meaningful. We've built a formal model of this process, and it turns out the brain may be solving the same kind of optimisation problem that underlies compression in information systems. What that means for how we think about learning, rest, and cognitive wellbeing is only beginning to come into focus.

We Know How to Make AI Safer. So Why Don't We?

Provenance markers. Uncertainty signals. Targeted refusals. The tools exist. They're not being built because they slow things down, reduce engagement, and hurt the metrics that matter to the companies deploying AI.

The problem isn't inside the model. It's around it.

Safety Beyond the Model — new research from the AI+Wellbeing Institute — argues that AI safety is an institutional design problem as much as a technical one.

The Global Wellbeing Observatory

The Wellbeing Observatory is an ongoing research project at the AI+Wellbeing Institute. It is based on a novel approach to quantifying how economies contribute to or detract from wellbeing.

This interactive global dashboard visualizes “wellbeing efficiency” (the extent to which economies support or detract from wellbeing) across countries from 1970 to 2024.

Jean Robin, Jean-Paul Duthieuw Leynaick

Policy failures, from climate shocks to pandemic mismanagement, reveal the inadequacy of static, prediction-based governance.

Research from ICLA, the University of Tokyo and the University of Melbourne shows a straightforward way forward.

GDP has surged, but wellbeing has not kept pace. This research shows that what matters most may not be how much an economy grows, but how it allocates its resources.

Global GDP has tripled since 1970, yet life satisfaction in wealthy nations has not increased. This disconnect—the Easterlin paradox—reflects a robust empirical pattern: wellbeing rises with income but with sharply diminishing returns. Early income gains secure basic capabilities; later gains increasingly fund status competition, complexity and defensive spending. Here we show that what matters for national wellbeing is not how much an economy spends, but how it allocates that spending.

Psychological Correlates of Authoritarianism: An Interactive Educational Model

This simulation provides an interactive exploration of the empirical relationships between personality, cognition, and authoritarian attitudes as established in the peer-reviewed literature. It is designed for educational use in courses covering political psychology, personality research, and social cognition.

Value Creator 2035

AI Won’t Lead … But Your People Will

Value Creator 2035: Who Will Lead in the Age of AI?

Chris Lowndes, AI lead for Accenture, and John Ricketts hold a series of workshops and discussions with ICLA students. Here we introduce the Value Creator 2035 blueprint: five human competencies our curriculum is engineered to build, and why they matter for your future talent pipeline.

Watch the videos, read the findings.

Get ready for the future … that’s where you’ll spend the rest of your life!

(Losing &) Finding Purpose in the Age of AI

This short documentary follows my personal journey of losing and rediscovering purpose in the age of AI.

What started as a simple project turned into a deeper search for joy, identity, and meaning. With help from my professor, the Ikigai model, and honest answers from my family, I began to see myself in a new way.

In a world where AI can do many of the “easy” things, this video explores what still makes us human, and why our purpose might be closer than we think.

Lukas Juul Hansen, exchange student from Denmark (Student ID: 2508860)

Invisible Work Matters

Invisible Work? MATTERS.

A CAMPAIGN TO MAKE CARE WORK VISIBLE

Using AI-assisted erasure techniques, this campaign removes caregivers from iconic photographs of care work. What remains is the evidence of labor: the bathed child, the swept floor, the tended home, the cared-for elder.

The work is visible. The workers are not.

By making economic erasure literal (by showing the gap, the void, the absence), we ask: Who’s missing from our economy? And what happens when we refuse to see them?

Anna Revesz

BAD and AI+Wellbeing: developing and implementing community wellbeing.

Urban & Rural Development in NSW, Australia

Locally driven, culture based regeneration.

Brookvale Arts District (BAD) demonstrates how a district-based approach can transform fragmented local energy into a coherent, culturally vibrant and economically active precinct through coordination, not control.

Before BAD, Brookvale comprised creative studios, breweries, light industrial businesses and emerging cultural groups operating independently. Activity was strong, but it lacked visibility, a shared identity and a mechanism to collaborate. BAD introduced a lightweight district layer (a shared narrative, simple coordination and clear communication) shaped with the community. It amplified what already existed rather than replacing it.

Once established, momentum grew through continuity. Brookvale evolved into a recognised cultural destination with a stronger night-time economy, more frequent cultural programming and growing cross-industry collaboration. BAD also became a clearer point of engagement for government and partners, improving delivery confidence and investment readiness.

AI Safety: A Liberal Arts Approach

A liberal arts education equips us to approach AI safety as a ‘human systems’ problem, not a purely technical one. In practice, the risks that matter most (misaligned incentives, opaque institutions, cultural blind spots, brittle governance, and unintended social consequences) emerge at the boundary between code and society. A siloed viewpoint can optimize one layer (model performance, legal compliance, or ethics statements) while missing how the whole system behaves when deployed at scale. By contrast, liberal arts training builds the habit of integrating ways of knowing: empirical evidence and statistical reasoning, philosophical clarity about values and responsibility, historical awareness of how technologies reshape power, and communicative skill for building legitimacy across stakeholders. That integrative capacity doesn’t replace technical expertise; it makes technical work safer, because it helps us ask the right questions early, notice second-order effects, and design AI that is trustworthy not only in the lab but in the lived reality of diverse communities.

ICLA Students Build Tomorrow

“2028–2032: Breaking Point … This is when normal ends. Societies will face a redefinition of labor, trust, and even value.”

So what can we do? What world do we want?

ICLA students are building the tomorrow they want.